Thresholds

Overview

Thresholds determine the point at which a metric indicates a problem. When a metric value crosses its thresholds in either direction, Bigeye sends an alert to the contacts specified in the metric itself or in any SLAs that the metric is a part of.

There are a few different kinds of thresholds that can be used in Bigeye:

Autothresholds

Bigeye uses thresholds to alert data teams to anomalies that need attention. While Bigeye users can set their own thresholds using methods such as standard deviation or constants, Autothresholds use a proprietary machine-learning engine to automatically generate thresholds for every data attribute you track, giving you meaningful and actionable alerts with zero manual effort.

Autothresholds learn from your historical data, factor in seasonality and trend, and adapt to natural changes in your data over time.

Autothresholds are periodically calculated using the following process:

- Analyze the underlying structure of the series with some preliminary statistical tests.

- Perform a blind prediction test with various techniques and select the most accurate.

- Analyze past data to develop a model for the uncertainty of future values.

- Integrate forecasts, information about the underlying structure, and uncertainty to calculate boundaries for the limits of “expected” behavior.

- Adjust the expected range for user settings, such as sensitivity and bounds.
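The Autothresholds engine is proprietary, so the following is only a minimal sketch of the general shape of steps 2 through 5 above, not Bigeye's implementation. The three candidate forecasters, the `width` multiplier, and every function name are assumptions chosen for illustration; the preliminary structural tests from step 1 are omitted.

```python
from statistics import mean, stdev

# Hypothetical candidate forecasters; each predicts the next value in a series.
def naive_last(history, season):
    return history[-1]                # repeat the most recent value

def seasonal_naive(history, season):
    return history[-season]           # repeat the value from one season ago

def moving_average(history, season):
    return mean(history[-season:])    # average over the last season

CANDIDATES = {
    "naive_last": naive_last,
    "seasonal_naive": seasonal_naive,
    "moving_average": moving_average,
}

def backtest_errors(history, forecaster, season, holdout):
    """One-step-ahead errors on the last `holdout` points (a blind prediction test)."""
    errors = []
    for i in range(len(history) - holdout, len(history)):
        prediction = forecaster(history[:i], season)  # only data before point i
        errors.append(history[i] - prediction)
    return errors

def expected_range(history, season=7, holdout=7, width=3.0, upper=True, lower=True):
    if len(history) < season + holdout:
        raise ValueError("not enough history for the blind prediction test")
    # Select the candidate with the lowest mean absolute error.
    results = {name: backtest_errors(history, f, season, holdout)
               for name, f in CANDIDATES.items()}
    best = min(results, key=lambda name: mean(abs(e) for e in results[name]))
    # Model uncertainty as the spread of the winning candidate's past errors.
    spread = stdev(results[best])
    # Combine forecast and uncertainty, then apply user settings.
    forecast = CANDIDATES[best](history, season)
    low = forecast - width * spread if lower else None
    high = forecast + width * spread if upper else None
    return best, low, high
```

In this sketch, a larger `width` plays the role of a wider sensitivity setting, and the `upper` and `lower` flags stand in for the bound settings.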

Configure Autothresholds

Set the training history and training frequency for autothresholds to get the desired alerts.

Training history

Set the number of days of history that Bigeye’s Autothresholds must use to generate thresholds. To configure the training window, navigate to Advanced Settings > Autothresholds > Autothresholds training history and enter the number of days. The default training window is 21 days, meaning that all metric values observed in the past 21 days are used in model training.

When you enter larger numbers for Autothresholds training history, you include more history to determine trends. If this number exceeds your autometrics.backfill.days number, the metric needs additional time to generate thresholds.

Note that Bigeye can automatically backfill metric history if there is a row creation time set on the table. This enables Autothresholds immediately without waiting to build a training period. If there is no suitable row creation time for the table or the backfill is unavailable, the application generates Autothresholds after the metric has a sufficient amount of training data.

Autothresholds training frequency

Autothresholds update periodically, adding new data to the training history, and are retrained on a schedule to adapt to changes in your data and to maximize accuracy.

To configure the autothresholds training frequency, navigate to Advanced Settings > Autothresholds > Autothresholds training frequency and set the cadence. Under default settings, Autothresholds pull in new data and retune models every 24 hours.

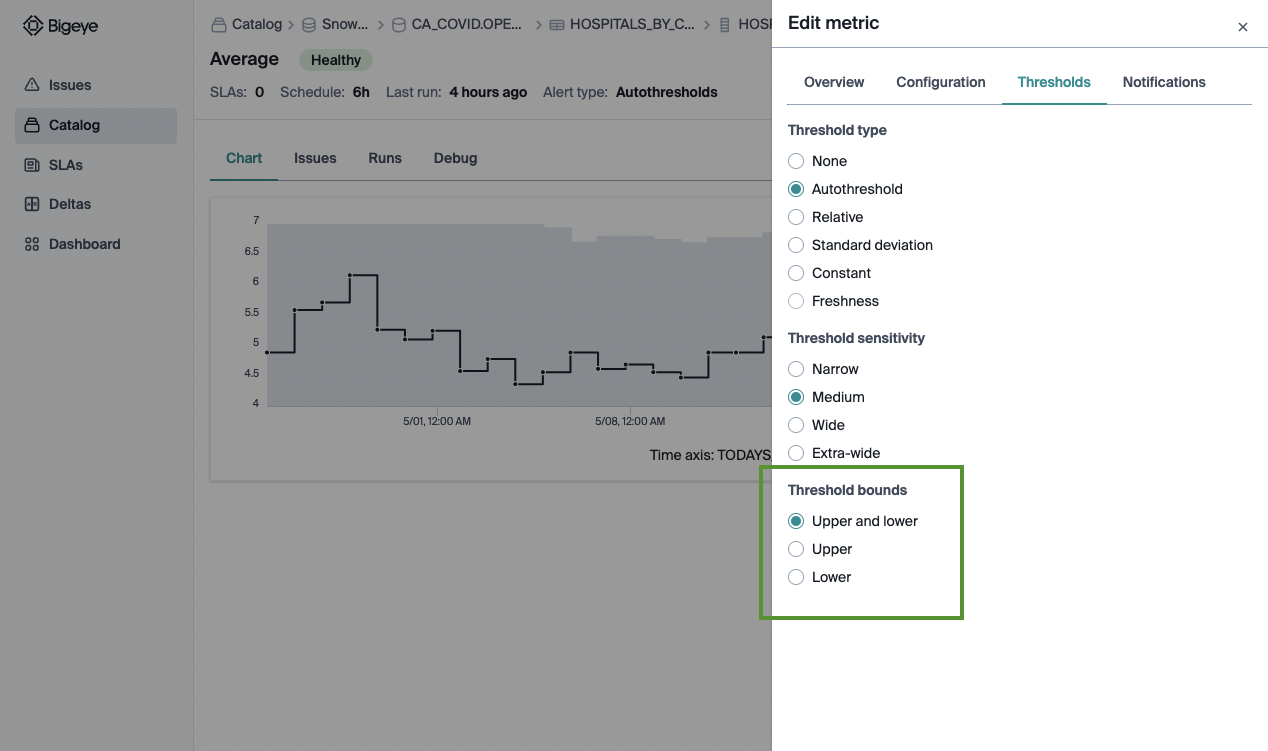

Adjust Autothresholds' Sensitivity

Autothreshold sensitivity can be adjusted after metric creation if the metric is alerting too often or not often enough. Setting the sensitivity to wide or extra wide widens the bounds and produces fewer alerts; setting it to narrow tightens the bounds and produces more alerts.

To adjust autothreshold sensitivity, select the relevant metrics under Catalog, and then select Edit from the Action dropdown. In the Edit metric modal, click Thresholds to set the sensitivity to any of these values:

- Narrow

- Medium

- Wide

- Extra-wide
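As a rough illustration of how a sensitivity level could widen or tighten an expected range, the sketch below scales the range around its midpoint. The multiplier values are hypothetical; Bigeye does not document the factors it applies for each level.

```python
# Hypothetical multipliers for illustration only; not Bigeye's actual values.
SENSITIVITY_WIDTH = {
    "narrow": 0.5,
    "medium": 1.0,
    "wide": 1.5,
    "extra-wide": 2.0,
}

def scale_range(low, high, sensitivity):
    """Scale an expected range around its midpoint by the sensitivity multiplier."""
    center = (low + high) / 2
    half_width = (high - low) / 2 * SENSITIVITY_WIDTH[sensitivity]
    return center - half_width, center + half_width
```

Under these assumed multipliers, `scale_range(90, 110, "wide")` gives a range of 85 to 115, so a value of 112 that alerted at medium sensitivity would no longer alert.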

Set threshold bounds

Sometimes, you only want to track the upper or lower bounds for a metric. For example, freshness metrics are upper bound only by default - meaning they alert when the data is late but not if it is delivered earlier than usual. Similarly, for some columns, you may not care if the percent of NULL values drops, but you do want to be notified if it increases. You can adjust autothreshold bound settings to upper and lower, upper only or lower only when editing a metric.
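The effect of the three bound settings can be sketched as a simple check. The function name and the string values for `bounds` are assumptions for illustration, not Bigeye identifiers.

```python
def is_alert(value, low, high, bounds="upper and lower"):
    """Return True if `value` breaches a bound that is being tracked."""
    too_high = bounds in ("upper and lower", "upper only") and value > high
    too_low = bounds in ("upper and lower", "lower only") and value < low
    return too_high or too_low
```

For a freshness-style metric with an expected range of 6 to 30 hours, `is_alert(2.0, 6.0, 30.0, "upper only")` is `False` (early data is ignored), while `is_alert(36.0, 6.0, 30.0, "upper only")` is `True`.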

Seasonality

Autothresholds detect and fit against seasonal patterns during the preliminary analysis of metric history and during blind-prediction model selection, integrating industry-leading and proprietary statistical and ML techniques.

Autothresholds follow classical statistical forecasting, which requires three or more cycles of a pattern to make strong inferences about seasonal patterns. For example, if you want to model the difference between Friday and Saturday web traffic, at least three weeks' worth of data must be within the training history.

This is partly why, by default, Autothresholds include three weeks of data in training history.
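The three-cycle rule of thumb translates into simple arithmetic on the training history. The helper names below are hypothetical and only illustrate the calculation.

```python
import math

def min_training_days(period_days, cycles=3):
    """Days of history needed to observe `cycles` full repetitions of a pattern."""
    return math.ceil(period_days * cycles)

def covers_seasonality(training_days, period_days, cycles=3):
    """True if the training history spans enough cycles of the pattern."""
    return training_days >= min_training_days(period_days, cycles)
```

A weekly pattern needs `min_training_days(7)`, which is 21 days, so the default training history just covers it. A roughly monthly pattern (30 days) would need 90 days of training history by the same rule.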

User Feedback

Users can provide feedback on autothreshold predictions when resolving issues in Bigeye. These annotations affect autothreshold sensitivity and training data so that autothresholds can better match business expectations over time.

If an issue is a false positive or considered normal, the user can indicate that by closing the issue as a “bad alert.” In these cases, autothresholds adapt to consider those points as expected behavior. Specifically, the previously alerting points are included in the training history, and the sensitivity is adjusted up one level.

If the issue accurately reflects a significant change in the underlying data, the user can indicate that by closing the issue as a “good alert.” They can specify whether it is the new normal moving forward by selecting “adapt thresholds,” in which case the previously alerting points are included in the training history. Alternatively, users can indicate they expect data to return to previous values by selecting “do not adapt thresholds,” in which case the alerting points are excluded from training history.

Custom Thresholds

Bigeye offers a variety of threshold types to ensure your monitoring covers all business requirements for your data. These threshold types can be used on Bigeye’s provided metrics or any template metrics you create.

While Autothresholds provide automatic anomaly detection, the following threshold types can be helpful for meeting specific monitoring requirements.

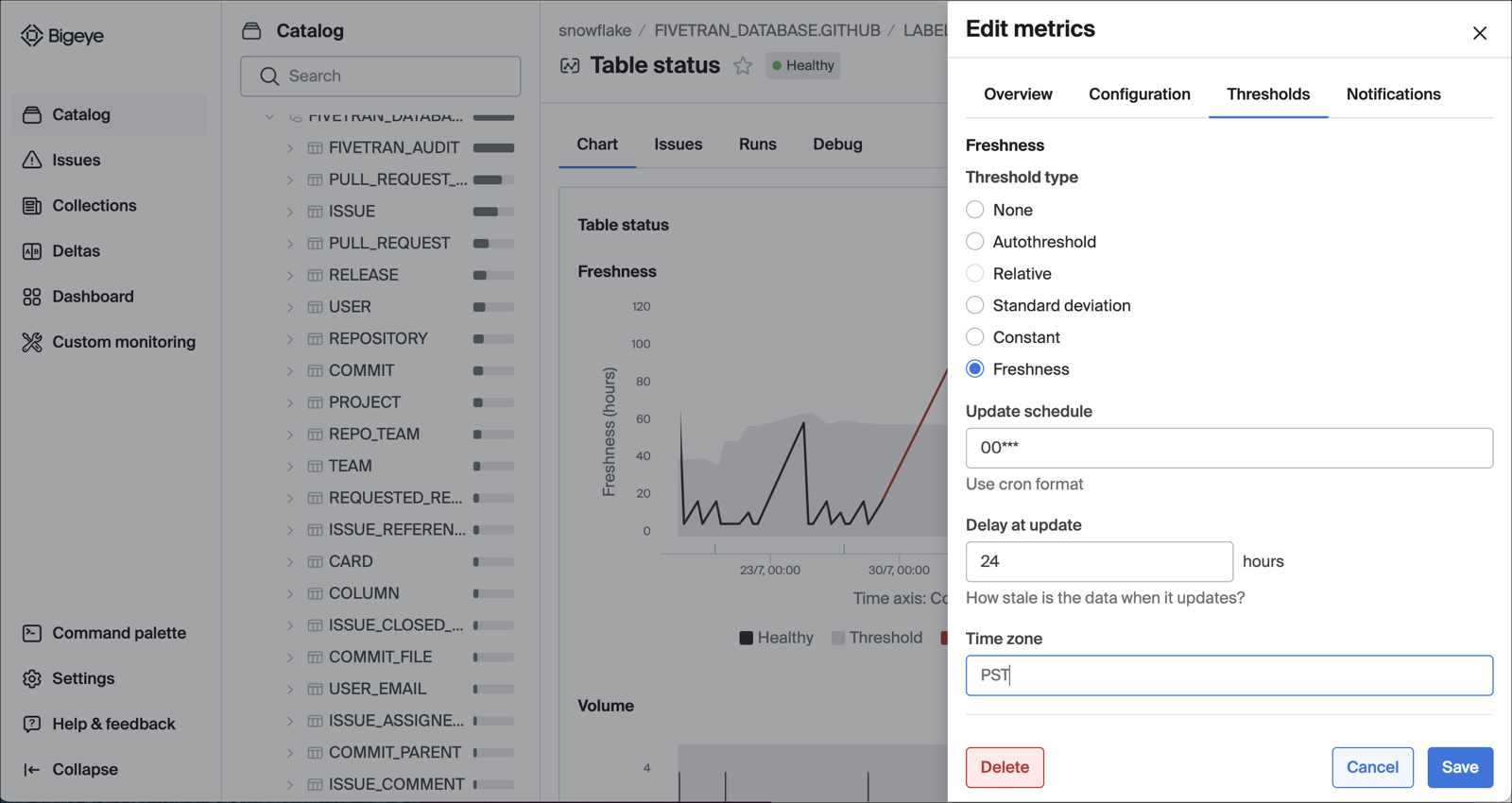

To update a metric’s threshold type:

- Navigate to the relevant metric.

- Select Edit metric and click the Thresholds tab.

- Select a Threshold type from the listed options:

  - None

  - Constant

  - Relative

  - Standard deviation

  - Freshness

None

Select None as the threshold type when the metric is only for debugging purposes and you do not want notifications. On scheduled metric runs, the metric will query for the observed value but not provide an expected range or alert.

Constant

Constant thresholds are useful when specific business logic can be asserted for the values in a column. For example, if you know that a number stored in the database is a percentage, its value must be between 0 and 100 at all times.

The metric alerts when the value computed is outside of the specified bounds. You can provide an upper bound only, a lower bound only, or both for a specific range.

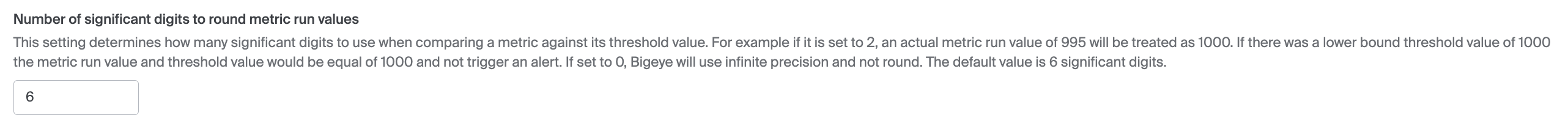

Thresholds are inclusive, so a value equal to a bound does not alert. By default, values are rounded to 6 significant digits for comparison. To change this behavior, modify the setting under Advanced Settings.

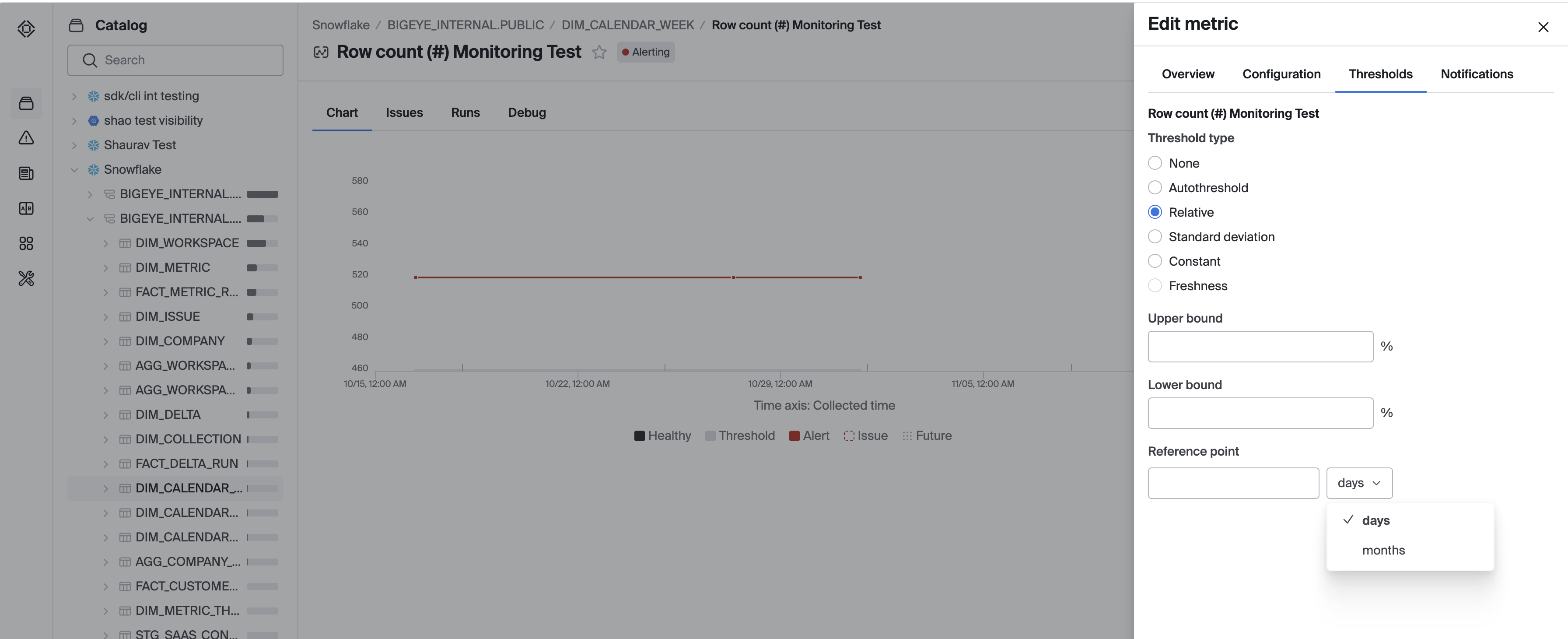
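The inclusive comparison with rounding can be sketched as follows. This assumes that both the observed value and the bounds are rounded to the same number of significant digits before comparing; the function names are hypothetical.

```python
def round_sig(x, digits=6):
    """Round x to `digits` significant digits."""
    if x == 0:
        return 0.0
    return float(f"{x:.{digits - 1}e}")

def breaches_constant(value, lower=None, upper=None, digits=6):
    """True if the rounded value falls strictly outside the inclusive bounds."""
    v = round_sig(value, digits)
    if lower is not None and v < round_sig(lower, digits):
        return True
    if upper is not None and v > round_sig(upper, digits):
        return True
    return False
```

With bounds of 0 and 100, a value of exactly 100 does not alert, and neither does 100.0000001, because it rounds to 100 at 6 significant digits. A value of 100.001 survives the rounding and alerts.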

Relative

Relative thresholds are designed for period-over-period comparisons.

Enter the Reference point as the number of days or months that you want to look back. For example, if you want a week-over-week comparison, enter 7 days. Upper bound and Lower bound represent the acceptable percent increase and decrease from the reference point. You can provide an upper bound only, a lower bound only, or both, depending on your needs.

Note that the calculation uses the observed value of the previous metric run closest in time to the exact reference point.
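A relative check can be sketched as picking the run nearest the reference point and comparing the percent change against the bounds. The function signature and the representation of runs as `(timestamp, value)` pairs are assumptions for illustration; the sketch does not handle a reference value of zero.

```python
from datetime import datetime, timedelta

def relative_check(runs, now, current_value, lookback_days,
                   upper_pct=None, lower_pct=None):
    """Compare current_value to the run closest in time to now - lookback_days.

    runs: list of (datetime, value) pairs from previous metric runs.
    Returns (percent change, whether a bound was breached).
    """
    reference_time = now - timedelta(days=lookback_days)
    # Pick the previous run whose timestamp is nearest the reference point.
    _, reference_value = min(runs, key=lambda run: abs(run[0] - reference_time))
    change_pct = (current_value - reference_value) / reference_value * 100
    too_high = upper_pct is not None and change_pct > upper_pct
    too_low = lower_pct is not None and change_pct < -lower_pct
    return change_pct, too_high or too_low
```

For a week-over-week comparison, pass `lookback_days=7`. If the run nearest that point recorded 100 and the current value is 125, the change is 25%, which breaches an upper bound of 20.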

Monthly Relative

For some use cases, data values change at specific dates during a month, rather than at regular intervals for a set number of days. For example, financial data often is updated on the 1st and 15th of each month. For these use cases, monthly relative thresholds will work better than a relative threshold based on a set number of days.

To use a monthly relative threshold, simply select "months" instead of "days" from the dropdown menu. The number input will then be a number of months, rather than a number of days.

Note that, for dates that don't have an exact match in the target month, the monthly relative threshold will use the last day of that month. For example, if a monthly relative threshold is used with 1 month as the period, then in a non-leap year March 29-31 will all have their thresholds calculated based on the data from February 28.

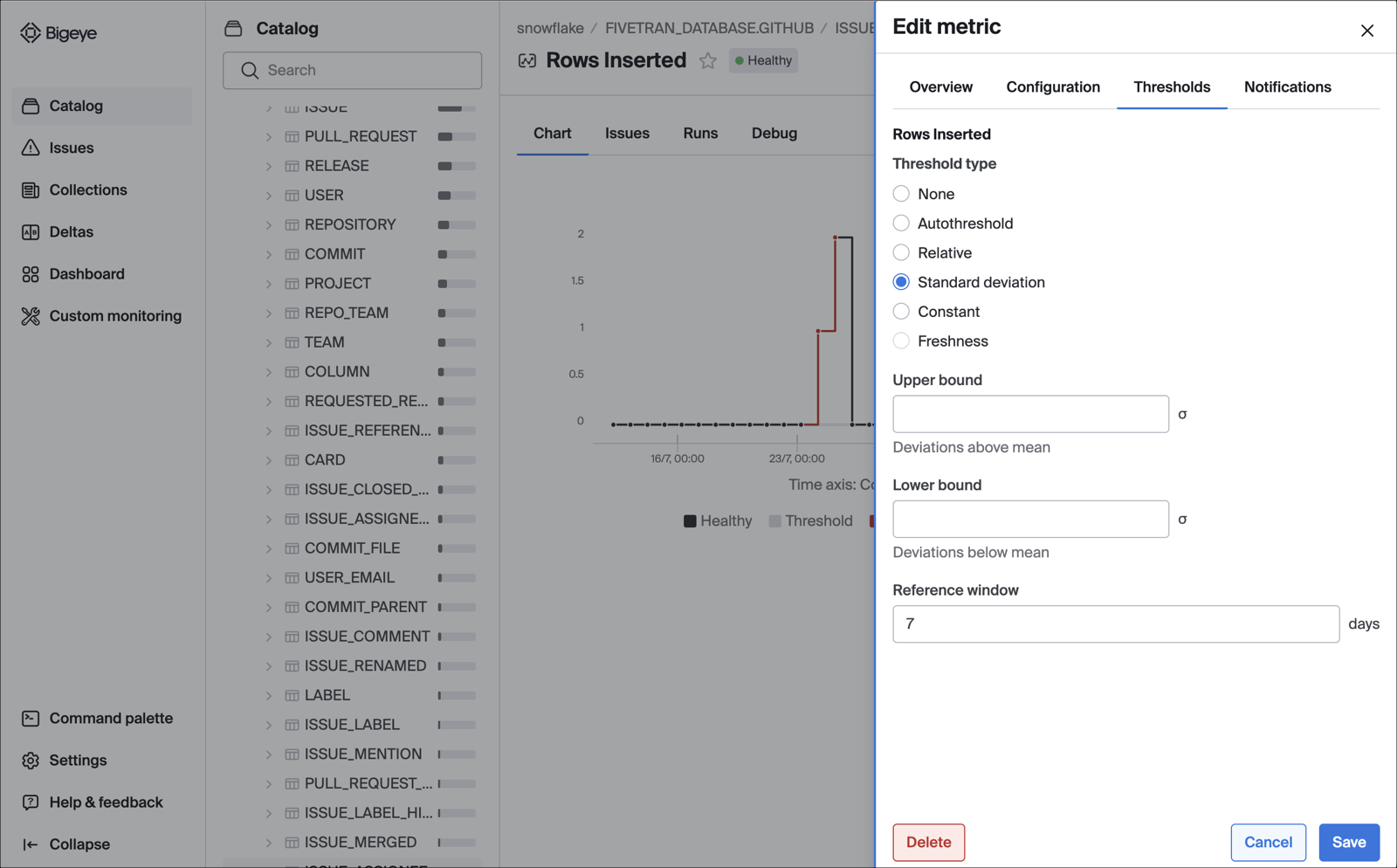
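The end-of-month rule for monthly relative thresholds can be sketched with Python's standard `calendar` module. The function name is hypothetical; the clamping behavior follows the description above.

```python
import calendar
from datetime import date

def monthly_reference_date(day, months_back=1):
    """Return the same day-of-month `months_back` months earlier,
    falling back to the last day of the target month when needed."""
    month_index = day.year * 12 + (day.month - 1) - months_back
    year, month = divmod(month_index, 12)
    month += 1  # convert 0-based month back to 1-12
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))
```

In 2023, March 29, 30, and 31 all map to February 28. In a leap year such as 2024, March 29 maps to February 29, while March 30 and 31 still fall back to the last day of February.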

Standard Deviation

Standard deviation thresholds alert when data crosses a set deviation from the mean.

Choose a Reference window to calculate the mean. For example, a reference window of 7 days includes all metric runs in the past seven days to calculate the mean. Set the Upper bound and Lower bound as acceptable deviations above and below the mean. You can provide an upper bound only, a lower bound only, or both, depending on your needs.
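A minimal sketch of the calculation, assuming the bounds are expressed as multiples of the standard deviation of the values in the reference window and that the sample standard deviation is used. Bigeye's exact formula is not documented here, and the function name is hypothetical.

```python
from statistics import mean, stdev

def stddev_range(window_values, upper=None, lower=None):
    """Expected range from the mean and sample standard deviation of the window.

    upper / lower: allowed number of standard deviations above / below the mean.
    """
    mu = mean(window_values)
    sigma = stdev(window_values)  # requires at least two values
    low = mu - lower * sigma if lower is not None else None
    high = mu + upper * sigma if upper is not None else None
    return low, high
```

For window values of 98, 100, and 102, the mean is 100 and the sample standard deviation is 2, so bounds of 2 deviations in each direction give an expected range of 96 to 104.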

Freshness

Freshness thresholds apply to the "hours since the latest value" and freshness metric types.

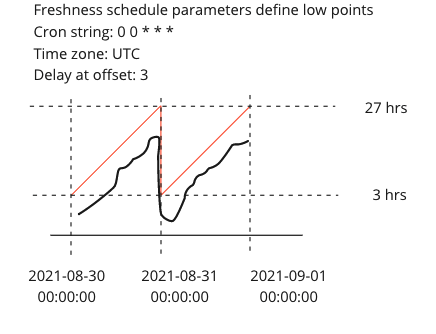

Freshness schedule thresholds are designed for infrequently-updated tables that should be loaded on a schedule.

The update schedule determines when the lowest point of the check should be, specified in cron format.

The timezone is specified either as a full name (America/Los_Angeles), an hour offset (-0800), or an abbreviation (PST).

Delay at update is the minimum value that the check will have, specified in hours.

The threshold increases from that point until the next execution of the cron schedule.
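One reading of this behavior is that the allowed freshness value equals the delay at update immediately after each scheduled run and then grows by one hour per hour until the next run. The sketch below illustrates that reading for the simplified case of a table loaded once a day at a fixed hour (the cron schedule `0 6 * * *` would be `update_hour=6`). It does not parse cron expressions or handle timezones, and the function name is hypothetical.

```python
from datetime import datetime, timedelta

def freshness_threshold_hours(now, update_hour, delay_hours):
    """Allowed hours-since-latest-value at `now` for a daily schedule.

    Assumes the threshold is `delay_hours` at the scheduled update time
    and rises linearly until the next scheduled update.
    """
    last_scheduled = now.replace(hour=update_hour, minute=0, second=0, microsecond=0)
    if last_scheduled > now:
        # Today's run has not happened yet; use yesterday's.
        last_scheduled -= timedelta(days=1)
    hours_since_schedule = (now - last_scheduled).total_seconds() / 3600
    return delay_hours + hours_since_schedule
```

With a 06:00 schedule and a delay of 2 hours, the threshold is 2 hours at 06:00, 14 hours at 18:00, and 25 hours at 05:00 the next morning, just before it resets.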

Example
