Connect GCP BigQuery

Connect your GCP BigQuery source to Bigeye.

- Basic setup: Follow the basic setup if your data and ingest jobs are in the same project and you want your Bigeye metric queries to run in the same project.

- Advanced setup: Follow the advanced setup if you have multiple projects and require more fine-grained control over permissions granted on different projects.

Basic setup

This setup assumes that your data and ingest jobs are in the same project and that you also want your Bigeye metric queries to run in the same project.

Create a service account in BigQuery

- In your Google Cloud Platform console, under IAM & Admin, navigate to the Service Accounts section.

- Click the Create Service Account button at the top of the tab.

- Enter the account name. As a best practice, you can name it Bigeye.

- Assign the following roles to the created account:

BigQuery Data Viewer

BigQuery Job User

BigQuery Metadata Viewer

BigQuery Resource Viewer- Once the service account is created, create a key by clicking Manage keys, Add key, Create new key.

- Select JSON as the type and click Create. Save the downloaded JSON file.

Add BigQuery service account as a new data source in Bigeye

-

On the Catalog page, click Add Source and then select BigQuery from the Choose a data source section. Click Next to configure the connection to your database.

-

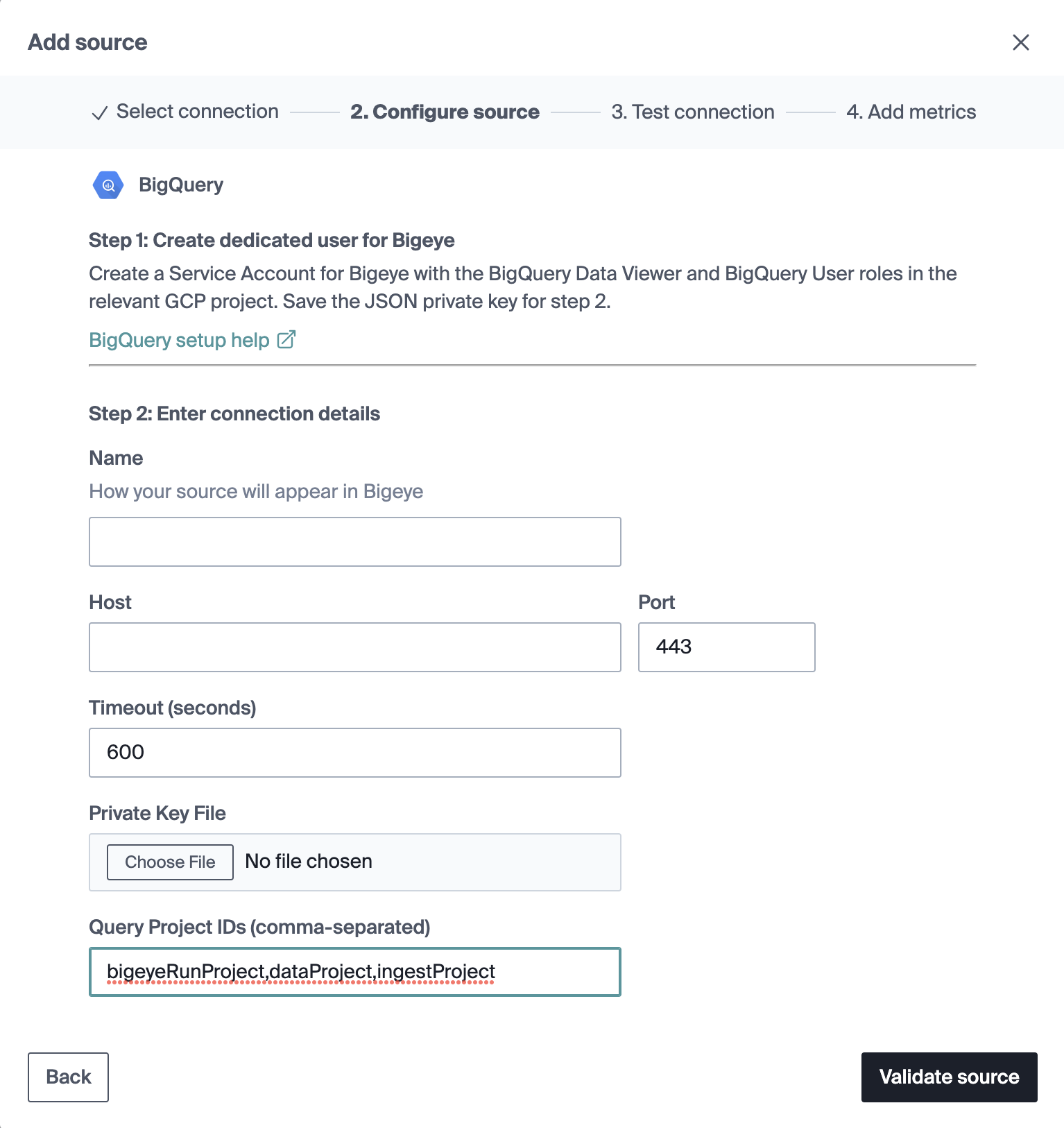

On the Configure source modal that opens, enter the following details:

Field Name Description Name Alias for this data source in Bigeye. Host BigQuery host URL. Timeout The default timeout for Bigeye queries. Private Key File Upload the file created from the BigQuery key. Target BigQuery Project ID Optional field for advanced setup. -

Click Validate Source.

-

Test connection: This step queries your warehouse to ensure that the user you provided has the permissions necessary to read data and run all Bigeye metric types. If the test connection returns an error, return to the GCP console and ensure the service account is permissioned correctly per the above instructions.

-

Add metrics: After the test connection is successful, you can deploy Metadata Metrics on your schemas. Bigeye recommends broad coverage of these metrics across your warehouse to detect the most common data quality issues.

-

Once you’ve added your source, Bigeye profiles your data to recommend autometrics. It can take up to 24 hours for this process to complete. Proceed to the Getting Started topic for next steps.

Advanced setup

This section is for users with a multi-project BigQuery setup and allows for more fine-grained control over permissions granted on different projects. In the basic use case above, your data and ingest jobs are in the same project, and you also want your metric queries to run in the same project. At a more granular level, there are three sets of permissions that are required for different purposes, potentially on different projects:

- Job creation permissions are required on the project where you want metric queries from Bigeye to run.

- Data and metadata viewer permissions are required on the project(s) where your tables and data reside.

- Resource viewer permissions are required on the project(s) where your ingest jobs run.

GCP configuration

Configure the project where you want Bigeye to run its metrics

- In the top bar to the right of the Google Cloud logo, click Project Selector, and select the project where you want your Bigeye metrics to run.

- Under IAM & Admin, navigate to the Service Accounts section.

- Click the Create Service Account button at the top of the tab.

- Enter the account name. As a best practice, you can name it Bigeye. Copy the email of the service account for later use. Click Done.

- Under IAM & Admin, navigate to the IAM section, and click Grant Access above the list of principals.

- Paste the service account's email in the Add Principals textbox, and assign the following roles to the created account.

BigQuery Job UserConfigure the projects where you have data that you want to be monitored

- In the top bar to the right of the Google Cloud logo, click Project Selector, and select the project where you have data that you want Bigeye to monitor.

- Under IAM & Admin, navigate to the IAM section, and click Grant Access above the list of principals.

- Paste the service account's email in the Add Principals textbox, and assign the following roles to the created account.

BigQuery Data Viewer

BigQuery Metadata Viewer- Repeat steps 7-9 for each project where you have data that you want Bigeye to monitor.

Configure the projects where you read or write data

- In the top bar to the right of the Google Cloud logo, click Project Selector, and select the project where you read or write data - these projects calculate lineage, popularity score, as well as freshness, volume, and read query metrics.

- Under IAM & Admin, navigate to the IAM section, and click Grant Access above the list of principals.

- Paste the service account's email in the Add Principals textbox, and assign the following roles to the created account.

BigQuery Resource Viewer- Repeat steps 11-13 for each project where you read or write data.

- In the top bar to the right of the Google Cloud logo, click Project Selector, and select the project you selected in step 1.

- Under IAM & Admin, navigate to the Service Accounts section.

- Create a key by clicking Manage keys, Add key, Create new key. Select JSON as the type and click Create. Save the downloaded JSON file.

Bigeye configuration

When adding your BigQuery data source in Bigeye, you must make it aware of the projects from the above section. Specifically, you must list: all of the projects where you have data that you want Bigeye to monitor and all of the projects where you read or write data. This configuration is done during the Add source flow, as shown below.

By default, the system populates the project the Bigeye user has entered in that field if none is set. Otherwise, the system looks at the query history for each project in that comma-separated list and uses it to look for queries for metrics and lineage.

Updated 7 months ago